Why Claude Code for Terraform

Writing Terraform is repetitive. VPC modules, IAM policies, RDS configurations, S3 bucket policies — the patterns are similar across projects but the details change every time. Copy-pasting from old projects introduces drift. Writing from scratch is slow.

Claude Code changes this workflow. Instead of writing Terraform line by line, you describe what you need in plain English and get a production-ready module back. Not a rough draft that needs heavy editing — an actual working module with variables, outputs, tags, and security defaults.

We have used Claude Code daily for Terraform and IaC generation across multiple client projects, and it has become our fastest way to scaffold infrastructure. This guide covers the exact prompts, patterns, and review process that work in practice.

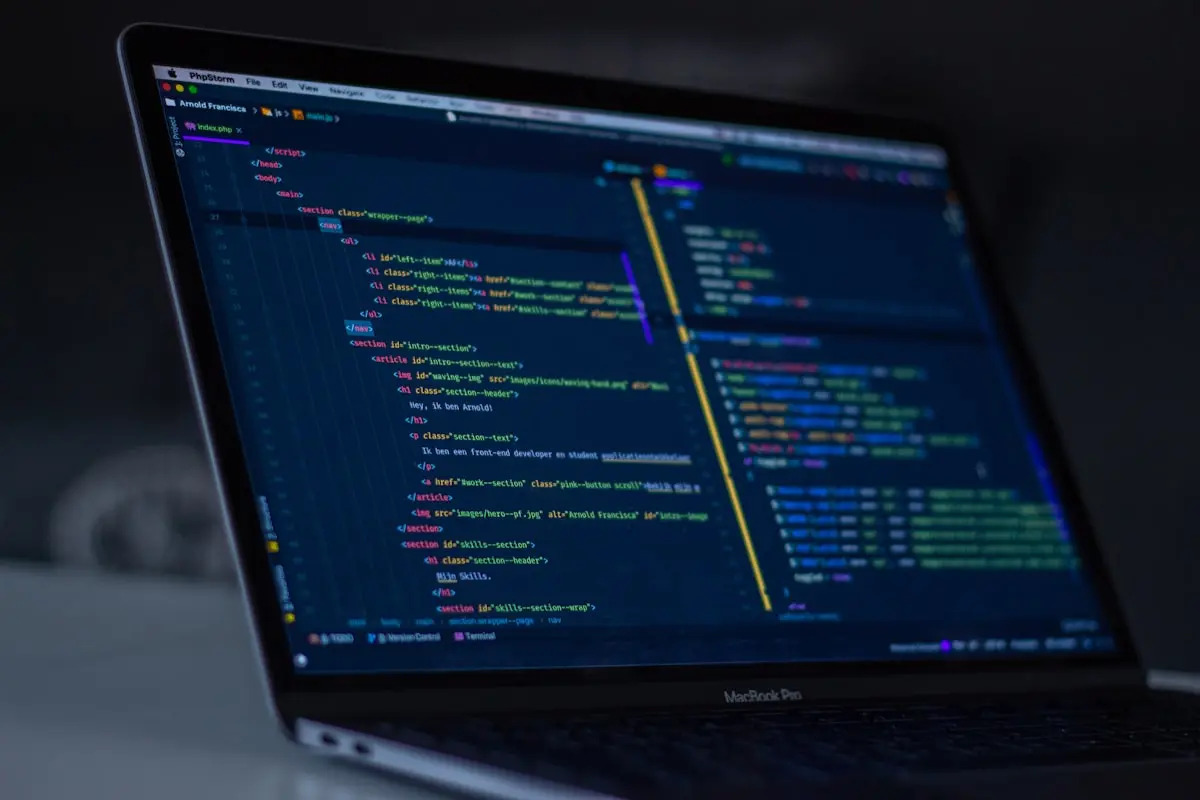

Claude Code generates complete Terraform modules from natural language descriptions


Setting Up Claude Code for Terraform Work

If you have not installed Claude Code yet, follow the complete setup guide. For Terraform-specific work, a few additional configurations help:

Create a CLAUDE.md file in your Terraform project root:

```markdown
# Project Context

- Cloud provider: AWS
- Terraform version: >= 1.5
- Provider version: hashicorp/aws ~> 5.0
- Backend: S3 with DynamoDB locking
- Naming convention: {project}-{environment}-{resource}
- All resources must have tags: Environment, Project, ManagedBy
- Use variables for everything configurable
- Include outputs for commonly referenced attributes
```

This context file tells Claude Code your project conventions upfront, so every generated module follows your standards automatically.
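The conventions in that file map directly onto a root-level `terraform` block. Below is a minimal sketch of a matching `versions.tf` and provider configuration. It assumes hypothetical state bucket and lock table names (`example-terraform-state`, `example-terraform-locks`) and a single `us-east-1` region. It also uses the provider's `default_tags`, which is one option for enforcing the required tags.

```hcl
# versions.tf: pins Terraform and the AWS provider to the versions in CLAUDE.md
terraform {
  required_version = ">= 1.5"

  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0"
    }
  }

  # S3 backend with DynamoDB state locking.
  # Bucket, key, and table names are hypothetical placeholders.
  backend "s3" {
    bucket         = "example-terraform-state"
    key            = "network/terraform.tfstate"
    region         = "us-east-1"
    dynamodb_table = "example-terraform-locks"
    encrypt        = true
  }
}

variable "project" {
  description = "Project name for resource naming and tagging"
  type        = string
}

variable "environment" {
  description = "Environment name (dev, staging, prod)"
  type        = string
}

# default_tags adds the required tags to every taggable resource
# this provider creates, so individual modules cannot forget them.
provider "aws" {
  region = "us-east-1" # assumption: single-region project

  default_tags {
    tags = {
      Project     = var.project
      Environment = var.environment
      ManagedBy   = "terraform"
    }
  }
}
```

A backend block cannot reference variables, which is why its values are literals here. Many teams supply them at init time with `terraform init -backend-config=...` instead.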

Prompt Pattern 1: VPC Module

The prompt:

```text
Create a Terraform module for an AWS VPC with:
- CIDR configurable via variable, default 10.0.0.0/16
- 3 public subnets and 3 private subnets across AZs
- NAT Gateway in one AZ (cost-optimized, not HA)
- Internet Gateway
- Route tables for public and private subnets
- VPC Flow Logs to CloudWatch
- All standard tags
```

What Claude Code generates:

```hcl
variable "vpc_cidr" {
  description = "CIDR block for the VPC"
  type        = string
  default     = "10.0.0.0/16"
}

variable "project" {
  description = "Project name for resource naming and tagging"
  type        = string
}

variable "environment" {
  description = "Environment name (dev, staging, prod)"
  type        = string
}

variable "availability_zones" {
  description = "List of availability zones"
  type        = list(string)
  default     = ["us-east-1a", "us-east-1b", "us-east-1c"]
}

locals {
  name_prefix = "${var.project}-${var.environment}"

  common_tags = {
    Project     = var.project
    Environment = var.environment
    ManagedBy   = "terraform"
  }
}

resource "aws_vpc" "main" {
  cidr_block           = var.vpc_cidr
  enable_dns_hostnames = true
  enable_dns_support   = true

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-vpc"
  })
}

# /16 + 4 new bits = /20 subnets; public subnets use netnums 0-2
resource "aws_subnet" "public" {
  count = length(var.availability_zones)

  vpc_id                  = aws_vpc.main.id
  cidr_block              = cidrsubnet(var.vpc_cidr, 4, count.index)
  availability_zone       = var.availability_zones[count.index]
  map_public_ip_on_launch = true

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-public-${var.availability_zones[count.index]}"
    Tier = "public"
  })
}

# Private subnets use the next netnums (3-5) so they never overlap the public ones
resource "aws_subnet" "private" {
  count = length(var.availability_zones)

  vpc_id            = aws_vpc.main.id
  cidr_block        = cidrsubnet(var.vpc_cidr, 4, count.index + length(var.availability_zones))
  availability_zone = var.availability_zones[count.index]

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-private-${var.availability_zones[count.index]}"
    Tier = "private"
  })
}

resource "aws_internet_gateway" "main" {
  vpc_id = aws_vpc.main.id

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-igw"
  })
}

resource "aws_eip" "nat" {
  domain = "vpc"

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-nat-eip"
  })
}

# Single NAT Gateway in the first public subnet (cost-optimized, not HA)
resource "aws_nat_gateway" "main" {
  allocation_id = aws_eip.nat.id
  subnet_id     = aws_subnet.public[0].id

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-nat"
  })

  depends_on = [aws_internet_gateway.main]
}
```

This is approximately 60% of a real VPC module, generated in seconds. Claude Code also generates the route tables, flow logs, and outputs that complete the module.
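For reference, the routing pieces follow the same conventions. Below is a minimal sketch of the route tables and a few outputs. It assumes the resource names from the VPC code above (`aws_vpc.main`, `aws_subnet.public`, `aws_subnet.private`, `aws_internet_gateway.main`, `aws_nat_gateway.main`) and the same locals. Flow logs are left out because they also need a log group and an IAM role.

```hcl
# Public route table: default route to the Internet Gateway
resource "aws_route_table" "public" {
  vpc_id = aws_vpc.main.id

  route {
    cidr_block = "0.0.0.0/0"
    gateway_id = aws_internet_gateway.main.id
  }

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-public-rt"
  })
}

# Private route table: default route through the single NAT Gateway
resource "aws_route_table" "private" {
  vpc_id = aws_vpc.main.id

  route {
    cidr_block     = "0.0.0.0/0"
    nat_gateway_id = aws_nat_gateway.main.id
  }

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-private-rt"
  })
}

# Attach each subnet to the route table for its tier
resource "aws_route_table_association" "public" {
  count = length(var.availability_zones)

  subnet_id      = aws_subnet.public[count.index].id
  route_table_id = aws_route_table.public.id
}

resource "aws_route_table_association" "private" {
  count = length(var.availability_zones)

  subnet_id      = aws_subnet.private[count.index].id
  route_table_id = aws_route_table.private.id
}

output "vpc_id" {
  description = "ID of the VPC"
  value       = aws_vpc.main.id
}

output "public_subnet_ids" {
  description = "IDs of the public subnets"
  value       = aws_subnet.public[*].id
}

output "private_subnet_ids" {
  description = "IDs of the private subnets"
  value       = aws_subnet.private[*].id
}
```

One private route table is enough here because there is only one NAT Gateway. An HA layout would use one NAT Gateway and one private route table per AZ.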

Prompt Pattern 2: RDS with Security

The prompt:

```text
Create a Terraform config for RDS PostgreSQL with:
- db.t3.medium instance
- Multi-AZ for production, single-AZ for dev
- Encrypted storage with default KMS key
- Automated backups, 7 day retention
- Not publicly accessible
- Security group allowing ingress only from private subnets
- Parameter group with log_statement = 'all' for dev
- Skip final snapshot in dev, require in prod
```

Claude Code handles the conditional logic between environments cleanly:

```hcl
resource "aws_db_instance" "main" {
  identifier     = "${local.name_prefix}-postgres"
  engine         = "postgres"
  engine_version = var.postgres_version
  instance_class = var.instance_class

  allocated_storage     = var.allocated_storage
  max_allocated_storage = var.max_allocated_storage # enables storage autoscaling up to this size
  storage_encrypted     = true                      # no kms_key_id, so the default aws/rds key is used

  db_name  = var.database_name
  username = var.master_username
  password = var.master_password # declare this variable with sensitive = true

  multi_az               = var.environment == "prod"
  publicly_accessible    = false
  vpc_security_group_ids = [aws_security_group.rds.id]
  db_subnet_group_name   = aws_db_subnet_group.main.name
  parameter_group_name   = aws_db_parameter_group.main.name

  backup_retention_period = 7
  backup_window           = "03:00-04:00"
  maintenance_window      = "Mon:04:00-Mon:05:00"

  # Dev can be torn down freely; prod must leave a final snapshot behind
  skip_final_snapshot       = var.environment != "prod"
  final_snapshot_identifier = var.environment == "prod" ? "${local.name_prefix}-final-snapshot" : null

  tags = merge(local.common_tags, {
    Name = "${local.name_prefix}-postgres"
  })
}
```

The environment-conditional multi_az and skip_final_snapshot logic is exactly what you would write manually, but generated in seconds.
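The instance references a subnet group, a security group, and a parameter group that the excerpt does not show. Below is a minimal sketch of those three, built from the prompt's requirements. It makes several assumptions:

- Three hypothetical inputs: `vpc_id`, `private_subnet_ids`, and `private_subnet_cidrs`.
- PostgreSQL 15 as the parameter group family, which must match `var.postgres_version`.
- The same `var.environment`, `local.name_prefix`, and `local.common_tags` used throughout.

```hcl
variable "vpc_id" {
  description = "VPC to place the database in (hypothetical input)"
  type        = string
}

variable "private_subnet_ids" {
  description = "Private subnet IDs for the DB subnet group (hypothetical input)"
  type        = list(string)
}

variable "private_subnet_cidrs" {
  description = "CIDR blocks of the private subnets allowed to connect (hypothetical input)"
  type        = list(string)
}

resource "aws_db_subnet_group" "main" {
  name       = "${local.name_prefix}-postgres"
  subnet_ids = var.private_subnet_ids
  tags       = local.common_tags
}

# Ingress limited to PostgreSQL traffic from the private subnets.
# No egress block: Terraform removes AWS's default allow-all egress rule.
resource "aws_security_group" "rds" {
  name        = "${local.name_prefix}-rds"
  description = "PostgreSQL access from private subnets"
  vpc_id      = var.vpc_id

  ingress {
    description = "PostgreSQL from private subnets"
    from_port   = 5432
    to_port     = 5432
    protocol    = "tcp"
    cidr_blocks = var.private_subnet_cidrs
  }

  tags = local.common_tags
}

resource "aws_db_parameter_group" "main" {
  name   = "${local.name_prefix}-postgres15"
  family = "postgres15" # assumption: must match the engine major version

  # Log every statement in dev only; other environments keep the engine default
  dynamic "parameter" {
    for_each = var.environment == "dev" ? [1] : []
    content {
      name  = "log_statement"
      value = "all"
    }
  }

  tags = local.common_tags
}
```

If the callers have known security groups, `security_groups` in the ingress block is a tighter alternative to CIDR ranges.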

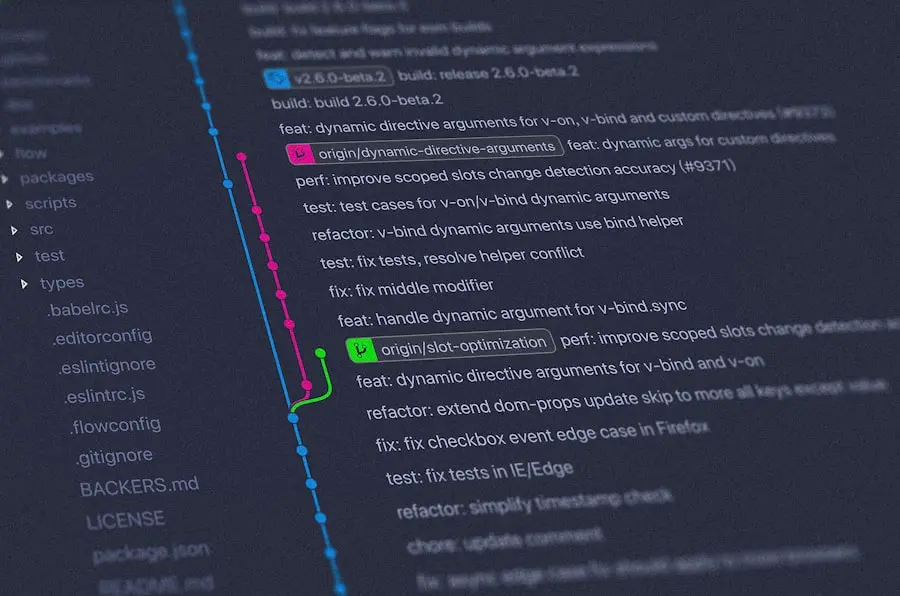

Claude Code integrates into your existing git workflow — generate, review, commit


Prompt Pattern 3: IAM Roles with Least Privilege

IAM is where AI-generated Terraform needs the most careful review. The prompt:

```text
Create an IAM role for an ECS task that needs to:
- Read from a specific S3 bucket (variable)
- Write to CloudWatch Logs
- Read secrets from Secrets Manager with a specific prefix
- Pull images from ECR

Use least privilege. No wildcards on resources.
```

Claude Code generates properly scoped policies:

```hcl
data "aws_iam_policy_document" "ecs_task" {
  # Read from one bucket: ListBucket applies to the bucket ARN,
  # GetObject to the object ARNs under it
  statement {
    sid    = "S3ReadAccess"
    effect = "Allow"
    actions = [
      "s3:GetObject",
      "s3:ListBucket"
    ]
    resources = [
      var.s3_bucket_arn,
      "${var.s3_bucket_arn}/*"
    ]
  }

  # Write only to this application's log group
  statement {
    sid    = "CloudWatchLogs"
    effect = "Allow"
    actions = [
      "logs:CreateLogStream",
      "logs:PutLogEvents"
    ]
    resources = [
      "${aws_cloudwatch_log_group.app.arn}:*"
    ]
  }

  # Read only secrets under the {project}/{environment}/ prefix
  statement {
    sid    = "SecretsManagerRead"
    effect = "Allow"
    actions = [
      "secretsmanager:GetSecretValue"
    ]
    resources = [
      "arn:aws:secretsmanager:${data.aws_region.current.name}:${data.aws_caller_identity.current.account_id}:secret:${var.project}/${var.environment}/*"
    ]
  }
}
```

No statement uses * as a resource; each is scoped to a specific bucket, log group, or secret prefix. This is better than what many engineers write manually under time pressure.
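The excerpt covers three of the prompt's four requirements. ECR is the exception to the no-wildcard rule. `ecr:GetAuthorizationToken` does not support resource-level permissions, so it must be granted on `*`. The image-pull actions themselves can be scoped to one repository. In ECS, those pulls are made with the task execution role rather than the task role.

Below is a minimal sketch of both roles, plus the two data sources the Secrets Manager ARN above relies on. It assumes:

- A hypothetical `var.ecr_repository_arn` input.
- The `local.name_prefix` and `local.common_tags` values from the VPC example.
- `aws_cloudwatch_log_group.app` and `var.s3_bucket_arn` defined elsewhere in the module.

```hcl
# Data sources referenced by the Secrets Manager ARN in the policy above
data "aws_region" "current" {}
data "aws_caller_identity" "current" {}

variable "ecr_repository_arn" {
  description = "ARN of the ECR repository to pull from (hypothetical input)"
  type        = string
}

# Trust policy shared by both roles: only ECS tasks may assume them
data "aws_iam_policy_document" "ecs_assume" {
  statement {
    effect  = "Allow"
    actions = ["sts:AssumeRole"]

    principals {
      type        = "Service"
      identifiers = ["ecs-tasks.amazonaws.com"]
    }
  }
}

# Task role: what the application itself may do (the policy document above)
resource "aws_iam_role" "ecs_task" {
  name               = "${local.name_prefix}-ecs-task"
  assume_role_policy = data.aws_iam_policy_document.ecs_assume.json
  tags               = local.common_tags
}

resource "aws_iam_role_policy" "ecs_task" {
  name   = "app-access"
  role   = aws_iam_role.ecs_task.id
  policy = data.aws_iam_policy_document.ecs_task.json
}

# Execution role: what ECS needs in order to start the task
data "aws_iam_policy_document" "ecr_pull" {
  # GetAuthorizationToken has no resource-level permissions, so "*" is unavoidable
  statement {
    sid       = "ECRAuth"
    effect    = "Allow"
    actions   = ["ecr:GetAuthorizationToken"]
    resources = ["*"]
  }

  # Layer and image reads are scoped to a single repository
  statement {
    sid    = "ECRPull"
    effect = "Allow"
    actions = [
      "ecr:BatchCheckLayerAvailability",
      "ecr:GetDownloadUrlForLayer",
      "ecr:BatchGetImage",
    ]
    resources = [var.ecr_repository_arn]
  }
}

resource "aws_iam_role" "ecs_execution" {
  name               = "${local.name_prefix}-ecs-execution"
  assume_role_policy = data.aws_iam_policy_document.ecs_assume.json
  tags               = local.common_tags
}

resource "aws_iam_role_policy" "ecr_pull" {
  name   = "ecr-pull"
  role   = aws_iam_role.ecs_execution.id
  policy = data.aws_iam_policy_document.ecr_pull.json
}
```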

Prompts That Produce Bad Output

Not every prompt works well. These patterns consistently produce mediocre Terraform:

Too vague:

```text
# Bad: "Create AWS infrastructure for a web app"
# Claude Code will make too many assumptions about architecture
```

Too broad:

```text
# Bad: "Create the entire infrastructure for a microservices platform"
# Output will be shallow: many resources, none configured well
```

Missing constraints:

```text
# Bad: "Create an S3 bucket"
# Without specifying encryption, versioning, lifecycle, and access,
# you get a bucket with defaults, which is rarely what production needs
```

What works: specific resources with explicit requirements, one module per prompt, and the constraints you care about stated up front.
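For comparison, here is roughly what an S3 prompt with explicit constraints should yield. It is a minimal sketch, assuming a hypothetical bucket name, SSE-KMS with the AWS-managed key, and a 90-day expiry for noncurrent versions. These are example choices, not recommendations, and it reuses `local.name_prefix` and `local.common_tags` from the VPC example.

```hcl
resource "aws_s3_bucket" "data" {
  bucket = "${local.name_prefix}-data" # hypothetical name; must be globally unique
  tags   = local.common_tags
}

# Keep old object versions so overwrites and deletes are recoverable
resource "aws_s3_bucket_versioning" "data" {
  bucket = aws_s3_bucket.data.id

  versioning_configuration {
    status = "Enabled"
  }
}

# Encrypt at rest with KMS; with no key ID, the AWS-managed aws/s3 key is used
resource "aws_s3_bucket_server_side_encryption_configuration" "data" {
  bucket = aws_s3_bucket.data.id

  rule {
    apply_server_side_encryption_by_default {
      sse_algorithm = "aws:kms"
    }
  }
}

# Block every form of public access
resource "aws_s3_bucket_public_access_block" "data" {
  bucket = aws_s3_bucket.data.id

  block_public_acls       = true
  block_public_policy     = true
  ignore_public_acls      = true
  restrict_public_buckets = true
}

# Expire noncurrent versions after 90 days to cap storage cost
resource "aws_s3_bucket_lifecycle_configuration" "data" {
  bucket = aws_s3_bucket.data.id

  rule {
    id     = "expire-noncurrent"
    status = "Enabled"

    filter {} # empty filter: the rule applies to every object

    noncurrent_version_expiration {
      noncurrent_days = 90
    }
  }

  # Versioning must be enabled before noncurrent-version rules make sense
  depends_on = [aws_s3_bucket_versioning.data]
}
```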

The Review Process

AI-generated Terraform should never go straight to terraform apply. The review process:

1. Validate Syntax

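```bash
terraform fmt -check
terraform init -backend=false  # validate needs providers installed; the backend is not required
terraform validate
```

2. Check the Plan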

```bash
terraform plan -out=plan.tfplan
```

Read the plan output carefully. Common issues Claude Code produces:

- Hardcoded regions: check for us-east-1 where you need a variable
- Missing lifecycle blocks: databases and other stateful resources often need prevent_destroy
- Default security group rules: it sometimes generates overly permissive egress rules
- Provider version constraints: the code may use features from newer provider versions than your lock file pins
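The first two fixes are small. Below is a minimal sketch that assumes a hypothetical `aws_region` variable and a region with at least three AZs. It derives the AZ list from the configured region instead of hardcoding `us-east-1` zones. The `prevent_destroy` block is shown as a comment because it belongs inside an existing resource such as the RDS instance.

```hcl
# Region is an input instead of a hardcoded "us-east-1"
variable "aws_region" {
  description = "AWS region to deploy into"
  type        = string
}

provider "aws" {
  region = var.aws_region
}

# Look up the AZs of the configured region instead of hardcoding
# ["us-east-1a", "us-east-1b", "us-east-1c"]
data "aws_availability_zones" "available" {
  state = "available"
}

locals {
  # First three AZs (slice fails if the region has fewer than three);
  # pass this to the VPC module's availability_zones input
  availability_zones = slice(data.aws_availability_zones.available.names, 0, 3)
}

# For stateful resources such as aws_db_instance.main, add this inside the
# resource block so any plan that would destroy it fails with an error:
#
#   lifecycle {
#     prevent_destroy = true
#   }
```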

3. Security Scan

```bash
# Using tfsec (now maintained as part of Trivy, where `trivy config .` runs the equivalent scan)
tfsec .

# Or checkov
checkov -d .
```

These catch common security misconfigurations: public S3 buckets, unencrypted resources, and overly permissive IAM policies.

4. Cost Check

```bash
infracost breakdown --path .
```

AI-generated infrastructure sometimes defaults to larger instance types than necessary. Always verify the cost before applying.
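A cost check catches oversized defaults after generation; a variable validation can reject them at plan time. Below is a minimal sketch for the RDS module's `instance_class` variable, assuming a hypothetical allow-list of classes. It works on Terraform 1.5 because the condition references only the variable being validated.

```hcl
variable "instance_class" {
  description = "RDS instance class"
  type        = string
  default     = "db.t3.medium"

  # Reject anything outside an approved, cost-reviewed list (hypothetical list)
  validation {
    condition     = contains(["db.t3.micro", "db.t3.small", "db.t3.medium"], var.instance_class)
    error_message = "The instance_class must be one of db.t3.micro, db.t3.small, or db.t3.medium."
  }
}
```

Production usually needs a wider list, so some teams keep separate allow-lists per environment.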

Claude Code fits into the standard IaC workflow — generate, validate, plan, apply


Claude Code vs ChatGPT for Terraform

We have used both extensively for infrastructure work. A detailed comparison covers more areas, but for Terraform specifically, here is how they compare:

| | Claude Code | ChatGPT |
|---|---|---|
| Project context awareness | Reads your codebase directly | Copy-paste only |
| Module consistency | Follows your naming conventions | Generic defaults |
| Multi-file generation | Creates variables.tf, outputs.tf, etc. | Usually one block |
| Iterative refinement | Edit in place, re-run plan | Start over each time |
| Existing code awareness | Can read existing .tf files | No context |

The biggest advantage is context. Claude Code reads your existing Terraform files, understands your naming patterns, and generates modules that match — not generic templates that need heavy editing.

Advanced: Generating Complete Module Structures

For a complete module, use this prompt pattern:

```text
Create a Terraform module in modules/rds/ with:
- main.tf: RDS instance, subnet group, parameter group
- variables.tf: all input variables with descriptions and types
- outputs.tf: instance endpoint, port, database name, security group ID
- security.tf: security group with configurable ingress CIDR

Requirements:
[list your specific requirements]
```

Claude Code creates the entire directory structure with properly separated files, matching how real Terraform projects are organized.
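Once the module exists, the root configuration only needs to call it and consume its outputs. Below is a minimal sketch of that call. It assumes:

- A VPC module named `vpc` that exposes `vpc_id` and `private_subnet_ids` outputs.
- Hypothetical module inputs (`allowed_cidr_blocks` and the others shown) and outputs (`endpoint`, `security_group_id`) that must match what the generated `variables.tf` and `outputs.tf` actually declare.
- Defaults for any remaining inputs.

```hcl
# Root main.tf: calls the generated module (input names are hypothetical)
module "rds" {
  source = "./modules/rds"

  project     = "shop" # hypothetical project name
  environment = "dev"

  vpc_id             = module.vpc.vpc_id
  private_subnet_ids = module.vpc.private_subnet_ids

  # The three private /20s that the VPC example's cidrsubnet() layout
  # produces for 10.0.0.0/16 (netnums 3, 4, 5)
  allowed_cidr_blocks = ["10.0.48.0/20", "10.0.64.0/20", "10.0.80.0/20"]

  instance_class  = "db.t3.medium"
  database_name   = "app"
  master_username = "app_admin"
  master_password = var.db_master_password
}

variable "db_master_password" {
  description = "Master password, supplied via the TF_VAR_db_master_password environment variable"
  type        = string
  sensitive   = true
}

# Re-export the module's outputs for other stacks or scripts
output "db_endpoint" {
  value = module.rds.endpoint
}

output "db_security_group_id" {
  value = module.rds.security_group_id
}
```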

Key Takeaways

- Claude Code generates production-quality Terraform modules from natural language prompts

- Add a CLAUDE.md file with your project conventions for consistent output

- Be specific in prompts: state exact requirements, constraints, and resource configurations

- Never skip the review process — validate, plan, security scan, and cost check before applying

- Claude Code’s context awareness (reading your existing codebase) is its biggest advantage over ChatGPT

- IAM policies need the most careful review — verify least privilege manually

- Use one-module-per-prompt for best results, not entire infrastructure in one shot

FAQ

Does Claude Code write production-ready Terraform?

In our experience, roughly 80-90% of what it writes is production-ready. The remaining 10-20% requires human review: checking for hardcoded values, verifying security configurations, and ensuring the module fits your specific architecture. For standard AWS resources (VPC, RDS, S3, IAM), the output is consistently good. For complex patterns (cross-account access, custom providers), more editing is needed.

How does Claude Code handle Terraform state?

In this workflow, Claude Code does not touch Terraform state. It can run shell commands when you grant permission, but here it only generates or modifies .tf files, and you run terraform plan and terraform apply yourself. This is the correct approach: AI should generate code, not execute infrastructure changes.

Can Claude Code refactor existing Terraform?

Yes. Point it at an existing module and ask it to refactor — extract variables, add outputs, split into multiple files, or convert from resource blocks to modules. Since it reads your codebase, it understands the existing structure before making changes.
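One detail to check when a refactor moves resources into a module: Terraform tracks resources by address. Without extra configuration, a moved resource is planned as a destroy plus a create. Since Terraform 1.1, a `moved` block records the new address instead.

Below is a minimal sketch for the root module, next to the `module "rds"` block. It assumes the RDS resources previously lived at the root and now live in a hypothetical `module.rds`.

```hcl
# Each moved block maps an old state address to its new one, so the
# plan shows a move instead of destroying and recreating the database.
moved {
  from = aws_db_instance.main
  to   = module.rds.aws_db_instance.main
}

moved {
  from = aws_db_subnet_group.main
  to   = module.rds.aws_db_subnet_group.main
}

moved {
  from = aws_security_group.rds
  to   = module.rds.aws_security_group.rds
}
```

After adding these, terraform plan should report the resources as moved, with no destroy or create actions, as long as the module still produces the same configuration.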

What Terraform version does Claude Code target?

With the Terraform version declared in CLAUDE.md, as in the setup above, Claude Code generates Terraform compatible with version 1.5+. If you need compatibility with an older version, specify it in your prompt or CLAUDE.md file. It handles HCL2 syntax, for_each, dynamic blocks, and modern provider configuration correctly.

Is it worth using for small Terraform changes?

For single-resource changes (adding a tag, modifying a variable default), editing manually is faster. Claude Code shines on module creation, refactoring, and multi-resource configurations where the boilerplate adds up. As a rule of thumb, if a change would take more than 5 minutes to write manually, Claude Code is faster.

Conclusion

Claude Code has fundamentally changed how fast Terraform modules get written. The combination of natural language prompts, project context awareness, and iterative refinement means infrastructure that used to take an hour to scaffold now takes minutes.

The key is treating it as a first draft — a very good first draft that still needs human review. Run terraform plan, run a security scanner, check the cost estimate. The AI writes the code. The engineer verifies the architecture.

Want help setting up Terraform modules for your AWS infrastructure? View our AWS Infrastructure Setup service
